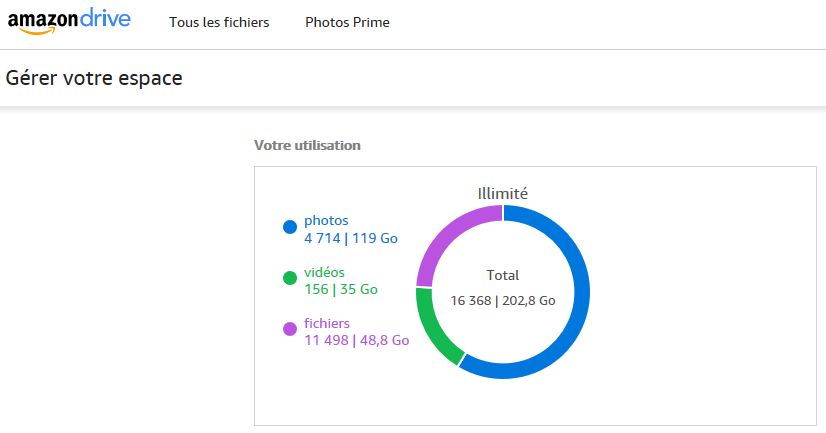

One of the most common issues with online backup is the upload speed actually available to the user (solutions like Mozy, Carbonite and CrashPlan all appeared to be quite limited in their cheapest entry-level or individual offers, a limitation that is probably marketing-driven). How long will it really take to send terabytes to the chosen server? Since I started using Amazon Cloud Drive in production, I can give real-life figures, which are quite reassuring.

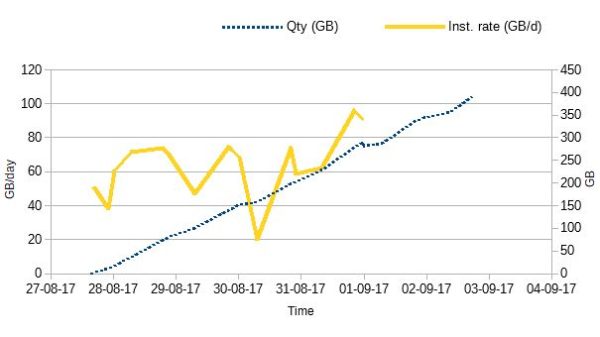

I am using an optical fiber connection (effectively limitless here) and I synchronize from a Synology DS413j, which is definitely weak in CPU (a single-core ARM). My figures therefore probably understate the actual maximum capacity of ACD. Nevertheless, I uploaded around 60 GB per day for several days. This can be judged over a few hundred gigabytes of transfers.

Addendum:

The actual transfer speed fluctuated (I was using the server in real production during the initial synchronisation, adding and moving many files around). The most interesting point is that one parameter actually influenced the overall rate: the number of files synchronised simultaneously (no surprise here). The initial value of 3 was almost immediately raised to 6 files, and the rate quickly stabilized around 60 GB/day. When I went up to 9 simultaneous files, the rate climbed to nearly 90 GB/day (a little before the yellow curve stops). But moving up to 12 files did not bring any additional gain (not shown on the graph). You may have to experiment to find your own value (it probably depends on the CPU of your Synology server and on the possible limitations of your link to ACD).
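To put these daily volumes in perspective, here is a small Python sketch that converts a rate in GB/day into an average line speed and estimates how long a given backup would take. The function names are my own for illustration, and I am assuming decimal units (1 GB = 10^9 bytes) and a steady rate, which real transfers will not quite match.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def gb_per_day_to_mbit_per_s(gb_per_day: float) -> float:
    """Average line rate in Mbit/s for a daily volume in decimal gigabytes."""
    bits_per_day = gb_per_day * 1e9 * 8  # assumption: 1 GB = 10^9 bytes
    return bits_per_day / SECONDS_PER_DAY / 1e6


def days_to_upload(total_gb: float, gb_per_day: float) -> float:
    """Days needed to send total_gb at a constant gb_per_day."""
    if gb_per_day <= 0:
        raise ValueError("gb_per_day must be positive")
    return total_gb / gb_per_day


# The two sustained rates observed above (6 and 9 simultaneous files).
for rate in (60, 90):
    mbit = gb_per_day_to_mbit_per_s(rate)
    days = days_to_upload(1000, rate)  # 1 TB taken as 1000 GB
    print(f"{rate} GB/day = {mbit:.1f} Mbit/s, 1 TB in {days:.1f} days")
```

At 60 GB/day this works out to about 5.6 Mbit/s on average and roughly 17 days per terabyte; at 90 GB/day, about 8.3 Mbit/s and roughly 11 days.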
